credit: metamorworks / shutterstock.com

In previous posts we discussed different ways to implement differential privacy, each of which offers some trade-off between privacy and utility. But what does “utility” mean, and how do we know we are preserving it? To discuss this topic, we are pleased to introduce another guest author in our blog series, Claire McKay Bowen, Lead Data Scientist for Privacy and Data Security at the Urban Institute. Claire’s research focuses on assessing the quality of differentially private data synthesis methods and on science communication. In 2021, the Committee of Presidents of Statistical Societies identified her as an emerging leader in statistics for her technical contributions and leadership in statistics and in the field of data privacy and confidentiality.

– Joseph Near and David Darais

As a privacy researcher, I am often asked, “How do you ensure that any publicly released differentially private data or statistics will still produce valid results? How do you balance this against disclosure risks or privacy needs?” Most people who ask these questions expect a definitive utility metric that perfectly evaluates the quality of any differentially private data or public statistics. But no such metric exists in general.

Instead of looking for the ultimate utility metric, we need to ask ourselves, “Who are the data practitioners or data users, and why will they use the data and statistics?” In other words, privacy researchers should consult data users and choose data quality metrics based on how other researchers, institutions, and government agencies will use the data and statistics.

However, determining which specific metrics to implement is itself a whole area of research. It is not possible to predict every analysis that data users may apply and to ensure that the data will provide valid results for each of those analyses. That means privacy researchers should have open discussions with data practitioners about the right utility metrics for evaluating the quality of the altered data, since each data set and each use of the data is unique.

Utility Metrics

To provide some guidance, I will cover several utility metrics used by privacy researchers and data users. Note that these examples cover only a few of the many types that exist.

Summary Statistics

Many privacy researchers first examine the summary statistics of the anonymized data as a quick and easy starting point for assessing utility. Most data practitioners also find summary statistics easier to understand and interpret than other utility metrics. For example, common summary statistics measure how well the released data preserve the counts, means, and correlations for each variable or combination of variables. The privacy expert then reports the distance between the original results and the noisy results. Typically, these distance measures are bias and root mean squared error.

As an example, for the 2020 Census, the United States Census Bureau announced the following utility metrics and how it defined them:

- Mean absolute error,

- Mean numeric error,

- Root mean squared error,

- Mean absolute percent error,

- Coefficient of variation,

- Total absolute error of shares, and

- Percent difference thresholds, that is, the count of absolute percent differences above a certain threshold

Mean absolute error, for example, is the average absolute value of the count differences for a given set of data, where each difference is taken between the result from the anonymized data and the result from the confidential data. These metrics are averaged across geographies, since most data practitioners want these values at particular geographic levels, such as counties.
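To make the error measures above concrete, here is a minimal Python sketch that computes a few of them for counts across four geographies. The function name, the sample counts, and the choice to skip zero counts in the percent error are illustrative assumptions; the formulas are the standard textbook definitions and are not necessarily the Census Bureau's exact specifications.

```python
import math


def utility_metrics(confidential, noisy):
    """Compare noisy counts with confidential counts, one pair per geography."""
    if len(confidential) != len(noisy) or not confidential:
        raise ValueError("inputs must be non-empty and of equal length")

    # Signed error for each geography: noisy result minus confidential result.
    errors = [n - c for c, n in zip(confidential, noisy)]
    k = len(errors)

    # Percent errors are undefined for zero counts, so this sketch skips them.
    pct = [abs(e) / c for e, c in zip(errors, confidential) if c != 0]

    return {
        "mean_absolute_error": sum(abs(e) for e in errors) / k,
        "mean_numeric_error": sum(errors) / k,  # signed, so it shows bias
        "root_mean_squared_error": math.sqrt(sum(e * e for e in errors) / k),
        "mean_absolute_percent_error": (
            100 * sum(pct) / len(pct) if pct else float("nan")
        ),
    }


# Hypothetical counts for four geographies (made up for illustration).
confidential_counts = [120, 45, 300, 80]
noisy_counts = [118, 50, 297, 83]

metrics = utility_metrics(confidential_counts, noisy_counts)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

The signed mean numeric error reveals systematic over- or under-counting, while the absolute and squared versions describe the typical size of the error regardless of direction.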

Outcome-Specific Metrics

Privacy researchers should then ask data users which analyses they typically run, and use those analyses as an additional measure of data quality. The idea is that data users should reach similar decisions regardless of whether the analysis output came from the altered data or from the confidential data. The privacy community refers to this type of utility metric as an outcome-specific metric.

For example, many public policy research institutions, such as the Urban Institute, apply microsimulation models to determine how new tax policy programs will affect Americans. These models first estimate a baseline from current economic conditions in the United States and then compute a counterfactual, or alternative estimate, based on the proposed change to the policy program. The difference between the baseline and the counterfactual reveals the impact of the public policy program.
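The baseline-versus-counterfactual comparison can be illustrated with a toy Python sketch. Everything in it is hypothetical: the flat tax rule, the policy parameters, and the income values are made up for illustration and bear no relation to an actual microsimulation model. The point is only that the same analysis is run on both data sets and the resulting policy impacts are compared.

```python
def tax_owed(income, rate, exemption):
    """Hypothetical flat tax on income above an exemption amount."""
    return max(0.0, income - exemption) * rate


def policy_impact(incomes, baseline, reform):
    """Total tax under the reform minus total tax under the baseline."""
    base_total = sum(tax_owed(x, **baseline) for x in incomes)
    reform_total = sum(tax_owed(x, **reform) for x in incomes)
    return reform_total - base_total


# Made-up policy parameters: current law and a proposed change.
baseline = {"rate": 0.20, "exemption": 10_000}
reform = {"rate": 0.22, "exemption": 12_000}

# Made-up incomes standing in for confidential and synthetic records.
confidential_incomes = [15_000, 40_000, 85_000, 120_000]
synthetic_incomes = [16_000, 38_000, 88_000, 117_000]

impact_confidential = policy_impact(confidential_incomes, baseline, reform)
impact_synthetic = policy_impact(synthetic_incomes, baseline, reform)

# Outcome-specific utility: how far apart are the two estimated impacts?
relative_gap = abs(impact_synthetic - impact_confidential) / abs(impact_confidential)
print(f"relative gap in estimated impact: {relative_gap:.2%}")
```

A small relative gap suggests that an analyst would draw a similar conclusion about the reform from either data set, which is the property an outcome-specific metric is meant to capture.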

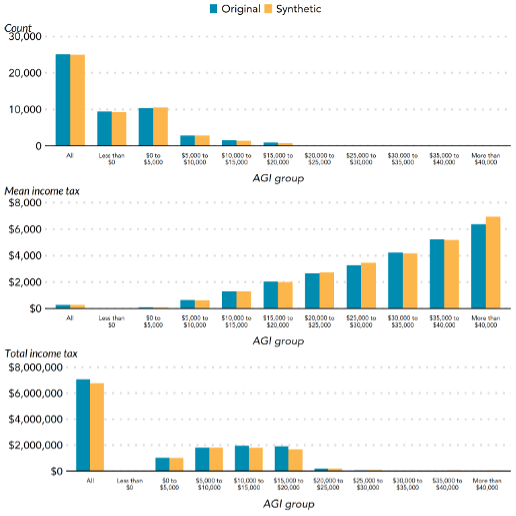

In 2020, the Urban Institute collaborated with the Statistics of Income Division of the Internal Revenue Service to create a synthetic data set (i.e., pseudo-records that statistically represent the original data) and implemented a tax microsimulation model to evaluate the quality of the synthetic file. The model calculated estimates of adjusted gross income, personal exemptions, deductions, regular income tax, and tax on long-term capital gains and dividends on both the confidential and the synthetic data. Figure 1 shows the results for calculating adjusted gross income, where the results from the original and synthetic data are close.

Figure 1. Results of the calculations across adjusted gross income (AGI) groups, including mean income tax and total income tax, based on the original and synthetic tax data from Bowen et al. (2020). AGI stands for adjusted gross income.

Similarly, in 2021 the Urban Institute conducted an extensive feasibility study of several differentially private methods for releasing tabular statistics, mean and quantile statistics, and regression analyses with cross-sectional data. The authors focused on these types of analyses based on informal interviews with several tax experts. Also drawing on these interviews, they evaluated the differentially private methods by how they affected analyses used for public policy decisions. Finally, the authors identified which methods work in theory under specific conditions but, based on consultation with tax experts, would run into problems in practice.

Marginal Distribution Metrics

Another approach is to measure the distributional distance between the original data and the altered data. Some privacy researchers apply the chi-squared test to categorical variables and the Kolmogorov-Smirnov test to continuous variables. As an example, Bowen and Snoke (2021) compared several differentially private synthetic data sets from the 2018 NIST Differential Privacy Synthetic Data Challenge. Specifically, the authors reported the p-value as a scale-free distance measure rather than as a traditional null hypothesis significance test, with a higher p-value indicating higher utility. This adjustment allows researchers to compare original and differentially private synthetic data sets that have different numbers of observations and variables on different scales.
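As a rough illustration for a single continuous variable, the sketch below computes the two-sample Kolmogorov-Smirnov statistic and an approximate p-value using only the Python standard library. The p-value relies on the usual large-sample series approximation, the simulated Gaussian data are made up, and the function names are hypothetical; none of this is taken from Bowen and Snoke (2021). In practice a statistics library would normally be used instead.

```python
import math
import random
from bisect import bisect_right


def ks_statistic(sample_a, sample_b):
    """Largest gap between the two empirical cumulative distributions."""
    a, b = sorted(sample_a), sorted(sample_b)
    gap = 0.0
    for x in a + b:
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap


def ks_pvalue(d, n, m, terms=100):
    """Approximate p-value from the asymptotic Kolmogorov distribution."""
    n_eff = n * m / (n + m)
    lam = (math.sqrt(n_eff) + 0.12 + 0.11 / math.sqrt(n_eff)) * d
    if lam < 0.2:
        return 1.0  # the series is numerically unstable here; true value is ~1
    total = sum(
        (-1) ** (j - 1) * math.exp(-2.0 * (j * lam) ** 2)
        for j in range(1, terms + 1)
    )
    return min(1.0, max(0.0, 2.0 * total))


# Simulated stand-ins for one continuous variable (made up for illustration).
rng = random.Random(1)
confidential = [rng.gauss(50, 10) for _ in range(500)]
synthetic_close = [rng.gauss(50, 10) for _ in range(400)]  # same distribution
synthetic_far = [rng.gauss(60, 10) for _ in range(400)]    # shifted mean

for name, synthetic in [("close", synthetic_close), ("far", synthetic_far)]:
    d = ks_statistic(confidential, synthetic)
    p = ks_pvalue(d, len(confidential), len(synthetic))
    print(f"{name}: D = {d:.3f}, p-value = {p:.3g}")
```

Read as a distance measure, the larger p-value marks the synthetic variable whose distribution is closer to the confidential one, even though the samples have different sizes.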

Global Utility Metrics

Using global utility metrics, or discriminant-based algorithms, is another way of evaluating data quality. This approach is becoming more popular in the literature, but it is not commonly used or adopted by any federal agency (as far as this author is aware). Global utility metrics try to measure how close or similar the anonymized data are to the confidential data.

A simple example would be a comparison between two anonymized data sets for education, one in which 10 percent of records have a “less than high school” education and one in which 13 percent do. The first is closer to the confidential data value of 9 percent. Most global utility metrics compare across many variables rather than one or two.

At a high level, global utility metrics first combine the confidential data with the publicly released data and label each record as coming from either the confidential data or the anonymized public data. Next, the privacy researcher must decide which classification model to use to distinguish whether a record is from the confidential or the anonymized data. If the classification model “has difficulty” assigning a record to the confidential or public data, privacy researchers assume that the two data sets are similar. More precisely, each record is given a probability of being classified as coming from the confidential data or from the public data. A probability close to 50 percent means that the classification model cannot predict any better than a coin flip.

Finally, depending on the global utility metric, the method condenses these probabilities into a single value or multiple values to convey how similar the released data are to the original data. The “accuracy” depends on which classification model privacy experts use, since each classification model measures different properties of the data. Privacy researchers need to conduct further research to fully understand these differences. They also need to explore how to make these methods more computationally efficient for the average data user.
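The steps above can be sketched in Python with one well-known global utility metric, the propensity score mean squared error (pMSE): the average squared difference between each record's predicted probability and the share of released records in the combined data. This sketch assumes a plain gradient-descent logistic regression as the classifier and uses simulated two-variable data; the function names, learning settings, and data are illustrative assumptions, and other classifiers would capture different properties of the data.

```python
import math
import random
from statistics import fmean, pstdev


def standardize(rows):
    """Scale each column to mean 0 and standard deviation 1."""
    columns = list(zip(*rows))
    means = [fmean(col) for col in columns]
    sds = [pstdev(col) or 1.0 for col in columns]  # guard constant columns
    return [[(v - m) / s for v, m, s in zip(row, means, sds)] for row in rows]


def predict(weights, x):
    """Estimated probability that record x came from the released data."""
    z = weights[-1] + sum(w * v for w, v in zip(weights, x))
    z = max(-30.0, min(30.0, z))  # keep exp() within a safe range
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(rows, labels, steps=300, learning_rate=0.5):
    """Plain gradient-descent logistic regression (illustrative, not tuned)."""
    weights = [0.0] * (len(rows[0]) + 1)  # last weight is the intercept
    for _ in range(steps):
        grad = [0.0] * len(weights)
        for x, y in zip(rows, labels):
            err = predict(weights, x) - y
            for j, v in enumerate(x):
                grad[j] += err * v
            grad[-1] += err
        weights = [w - learning_rate * g / len(rows) for w, g in zip(weights, grad)]
    return weights


def pmse(confidential, released):
    """Mean squared gap between propensity scores and the released share."""
    combined = standardize(confidential + released)
    labels = [0] * len(confidential) + [1] * len(released)  # 1 = released
    weights = fit_logistic(combined, labels)
    share = len(released) / len(combined)
    gaps = [(predict(weights, x) - share) ** 2 for x in combined]
    return sum(gaps) / len(gaps)


# Simulated two-variable data sets (made up for illustration).
rng = random.Random(0)


def draw(n, shift):
    return [[rng.gauss(shift, 1.0), rng.gauss(0.0, 1.0)] for _ in range(n)]


confidential = draw(200, 0.0)
close_release = draw(200, 0.0)  # same distribution as the confidential data
far_release = draw(200, 1.5)    # first variable shifted

pmse_close = pmse(confidential, close_release)
pmse_far = pmse(confidential, far_release)
print(f"pMSE (close release): {pmse_close:.4f}")
print(f"pMSE (far release):   {pmse_far:.4f}")
```

A pMSE near zero means the classifier's probabilities stay close to the released share (here 50 percent, the coin flip case), while larger values mean the classifier can tell the two data sets apart.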

Software tools

What about code for implementing these metrics? Unfortunately, very few software tools exist to implement these utility metrics. One is synthpop, an R package that generates synthetic versions of individual-level data and includes several utility metrics, such as a global utility metric called the pMSE-ratio. The privacy researchers who computed additional utility metrics for the 2018 NIST Differential Privacy Synthetic Data Challenge posted their open-source code on GitHub, but it is far from robust, production-quality code. Although there is demand for better utility metrics software, part of the challenge in developing these tools is the limited funding and time available to support this type of work.

Takeaway

It is impossible for privacy researchers and data practitioners to ensure that the released data and statistics provide valid results for all analyses. This is why they implement a suite of utility metrics to assess the quality of differentially private data and statistics. They also try to focus on a few key analyses based on the needs of data users, in order to get a more informative assessment of data quality and usability. These steps, along with ongoing conversations with data practitioners, are necessary because there is no “one size fits all” utility metric.

Coming Up Next

In this post we discussed several utility metrics for differential privacy that can be applied across a variety of use cases. In the next post we will turn our attention back to a particular use case: differentially private machine learning.

This post is part of a series on differential privacy. Learn more and browse all the posts published to date on the differential privacy blog series page in NIST’s Privacy Engineering Collaboration Space.